
Abstract

This paper examines the impact of “Web 2.0” technologies, design standards and information retrieval trends on the traditional library and its staff. Web 2.0 is defined, and an overview of its popular proliferation as a means of information retrieval is given. The function, utility and nature of the librarian are discussed in contrast to these novel schemes of accessing, storing and classifying information. Lastly, the popular Web 2.0 encyclopedia Wikipedia is examined in order to demonstrate how these technologies and design trends are fundamentally changing the way the end-user seeks information. Accordingly, we professionals must adapt or lose our jobs, as our traditional duties are increasingly becoming anachronistic.

The Web 2.0 Paradigm: Impacts on Library Science Methodology and Professionalism

Introduction

Buzzwords have a tendency to muddle arguments, to confuse and to obfuscate the true meaning and implications of change. Web 2.0 is a buzzword and, as we will see, is often defined using ambiguous and unspecific terminology. Nevertheless, the components and trends underlying Web 2.0 have come to revolutionize the ways in which the end-user accesses information systems, so that the greater body of users has by today rejected the old ways and begun to rely exclusively on these novel portals. In this sense Web 2.0, clearly identified or not, is a paradigm which is rapidly replacing top-down systems of classification and information retrieval. While theoretically this should not have great impact on the field of library science, in practice it has over the course of just a few years depopulated our libraries and called into question the very necessity of our jobs, as the end-user is now bypassing our guidance altogether and accessing information directly through slickly designed and interactive Web 2.0 information portals. In other words: the basic and traditional functions of libraries are being threatened. Some within our discipline are prone to argue that this is inevitable and that we should shift focus to minimizing the so-called “digital divide” and so become teachers and engineers of technology and information retrieval. Yet this is but a temporary task which does not secure the long-term viability of our profession; it presupposes a sustained demand for our services which will not necessarily exist in the future.

This paper aims to clarify the muddle surrounding the buzzword “Web 2.0” by succinctly defining it and considering the historical context of its usage. Furthermore, it will be demonstrated that the majority of end-users prefer design motifs and trends inherent to Web 2.0. As Web 2.0 is not simply a buzzword, and has radically influenced the way the end-user accesses information, we shall then consider the implications for library science and perhaps more alarmingly: the nature and function of a librarian. Lastly Wikipedia will be considered as an example of how a Web 2.0 library (or more broadly: information retrieval system) functions, with a particular emphasis on disinformation and the benefits of utilizing such technologies.

Web 2.0 examined

Definition

While the term Web 2.0 had been floating around for five years prior to 2004, it first garnered serious visibility and buzz during that year. At O’Reilly Media’s Web 2.0 conference John Battelle and Tim O’Reilly presented a developed scheme for examining the new standards of information access, which were then only in their infancy.[1] O’Reilly and Battelle claimed that Web 2.0 would see the internet as a platform for development, in contrast to the computer application, and that free collaboration and content generation among the end-user base would replace the information control and exclusivity of “Web 1.0.” The authors contrasted two pairs of services to demonstrate this shift in practice: Netscape/Google and Encyclopedia Britannica/Wikipedia.

Netscape offered a web browser and hoped to control the means by which the end-user accessed the internet, directing them by means of a “webtop” to information providers which had purchased Netscape servers. In this fashion corporatized, exclusive content was placed at the center of the user’s information retrieval experience. Google alternatively offered a service, rather than a desktop program, based upon organizing and presenting web pages via database management. While Netscape was prone to closed, scheduled releases, Google’s services are in a state of “perpetual beta.” Google serves as a dynamic engine for information retrieval, in contrast to the controlled, deliberately designed world of Netscape and traditional library classification systems. The contrast between the Encyclopedia Britannica and Wikipedia is even more obvious: while the former offered a limited number of articles written by experts, updated occasionally with new content, Wikipedia is an encyclopedia based upon the Web 2.0 concept known as “radical trust.” Radical trust relies upon the anonymous end-user to create, monitor and organize content. Quality control is entrusted to the effective work of “Linus’ Law:” given enough exposure, problems and imperfections will be worked out by the end-user base (“the wisdom of crowds”), resulting in a product greater than that envisioned by a single author.[2]

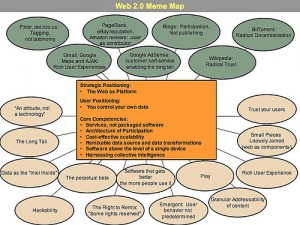

Figure 1. Web 2.0 diagram of related ideas. Courtesy: Tim O’Reilly.

Features

Paul Miller of Talis summarized the basic qualities of Web 2.0 as follows.[3] Web 2.0 is a push for: the freeing of data, the building of virtual applications, participation, design around the end-user, modularity, ease of sharing, communication, remixing, intelligence in design, long tail design, and trust. Data is being freed, “allowing it to be exposed, discovered and manipulated in a variety of ways distinct from the purpose of the application originally used to gain access.”[4] In this sense areas of information which were once exclusive to governments, powerful corporate entities and Ivy League academic institutions are being data mined and offered freely or at greatly reduced expense to the greater end-user population. As an example: while in the past physical space was a limitation on the amount of information a library could hold, Web 2.0 technology has made digital information systems prominent, streamlined and prolific, opening up access to a virtually unlimited amount of information to those with basic internet access.[5] I would like to add to Miller’s criteria Web 2.0’s impetus for “radical decentralization.” This can be observed most clearly in BitTorrent, a file sharing protocol in which users share data in a swarm of peers; there are no central servers to house the data. Accordingly content can be shared more quickly and more effectively, as no one server can be bogged down by saturated bandwidth or deny access in the event of its malfunction. In information retrieval radical decentralization is a methodology for maintaining data systems ranging from websites to collection databases and involves standards of collaborative authorship and ownership similar to Wikipedia.[6]

In the same way collaborative work on virtual applications is resulting in products which otherwise could not have been developed internally. Google Maps is a case in point, a collaboration between various software developers which resulted in a product starkly superior to alternatives, buttressed by end-user contributions and offered freely to the public.[7]

While Web 1.0 tended to involve content flowing from provider to end-user, Web 2.0 is modeled upon participation. As of 2004 up to 44% of American internet users created or shared content online ranging from personal thoughts to files.[8] The percentage of users doing so today is in all likelihood even higher (with the popularity of services such as Twitter and Flickr), although data is not available. Users prefer and flourish under systems in which they can contribute, and prefer personalization over the sterile and impersonal portals of the past. Web 2.0 systems allow users to generate, police and sort their own content through tag clouds, folksonomies, and other democratic design motifs.

Web 2.0 systems are designed around the end-user, to meet the user’s needs. This is in contrast to previous practices in which the mode of access, the style and the tempo of service were determined by service owners. Miller uses the example of having access to all flight information going from one location to another, not just from one airline, airline group or travel agent. In this sense Web 2.0 standards imply open access to information, a notion fundamentally averse to Netscape’s business model of yesteryear.

Web 2.0 applications are modular in the sense that they can be easily interchanged with other applications to produce customized experiences and new collaborative projects. Herein the open source community has reigned in recent years, reshaping the software landscape by displacing corporate alternatives. The Mozilla project is the most visible example: Firefox and Thunderbird present an increasingly evident threat to Internet Explorer and Outlook Express. Google’s Gmail service and Chrome browser likewise are chipping away at the once monolithic user base of Microsoft products.

Web 2.0 is about sharing “code, content, ideas.” While this superficially implies an end to profit, as we have seen this is not the case. Firms behind Google, Twitter and Amazon rely on user contributed content to populate their systems, but they profit from the sum of the parts. While Wikipedia is a volunteer project, and as such cannot be considered a firm in the strictest sense, it has over the years had no problem receiving funding through end-user donations. This has allowed for rapid expansion and innovation. In this way Web 2.0 offers the best of both worlds: firms profiting and the end-user gaining a valuable service for free or at greatly reduced expense.

Another aspect of the paradigm is open and seamless communication and facilitation amongst end-users. While Miller reminds us that this was one of the original aims of the Web in general, only Web 2.0 has been instrumental in finally bringing the whole of internet users together. Web 2.0 services offer interaction with other end-users, and the inevitable consequence is content creation.

Web 2.0 services also have a capacity for remixing. This refers mainly to syndication: the ability to take exactly what the end-user requires from a source and re-implement it in his or her own content and/or information retrieval experience. RSS feeds are a prominent Web 2.0 technology which utilizes this capacity. Plaintext lists can be streamed from already published and formatted content and then inserted and merged with other feeds and content seamlessly. This technology is behind our Web 2.0 news portals.[9]
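To make the notion of remixing concrete, the following is a minimal sketch in Python of merging two RSS feeds into a single list of items. It is an illustration only and does not describe how any particular news portal works. The two feeds, their titles and the example.org links are invented, and the feeds are embedded as strings rather than fetched over the network.

```python
import xml.etree.ElementTree as ET
from email.utils import parsedate_to_datetime

# Two tiny, made-up RSS 2.0 documents standing in for real feeds.
FEED_A = """<rss version="2.0"><channel><title>Library News</title>
<item><title>New reading room opens</title><link>http://example.org/a1</link>
<pubDate>Mon, 02 Mar 2009 09:00:00 GMT</pubDate></item>
<item><title>Extended weekend hours</title><link>http://example.org/a2</link>
<pubDate>Wed, 04 Mar 2009 12:30:00 GMT</pubDate></item>
</channel></rss>"""

FEED_B = """<rss version="2.0"><channel><title>Campus Events</title>
<item><title>Guest lecture on folksonomies</title><link>http://example.org/b1</link>
<pubDate>Tue, 03 Mar 2009 18:00:00 GMT</pubDate></item>
</channel></rss>"""


def read_items(feed_xml):
    """Extract (date, source, title, link) tuples from one RSS document."""
    channel = ET.fromstring(feed_xml).find("channel")
    source = channel.findtext("title")
    items = []
    for item in channel.findall("item"):
        published = parsedate_to_datetime(item.findtext("pubDate"))
        items.append((published, source, item.findtext("title"), item.findtext("link")))
    return items


def merge_feeds(*feeds):
    """Combine items from several feeds into one list, newest first."""
    merged = []
    for feed in feeds:
        merged.extend(read_items(feed))
    return sorted(merged, key=lambda entry: entry[0], reverse=True)


merged = merge_feeds(FEED_A, FEED_B)
for published, source, title, link in merged:
    print(f"{published:%Y-%m-%d} [{source}] {title}")
```

The merged list interleaves items from both sources by publication date, which is the essence of syndication: the reader receives one stream without caring where each item was originally published.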

Web 2.0 services are intelligent, able to collect information about end-users to heuristically redirect them to relevant content and affiliates. Amazon offers recommendations for products based upon a user’s access history and does so with astonishing accuracy and relevancy. Web 2.0 services guide the user to make wise decisions about what information to retrieve in the same way librarians did in the past and they do so by the use of dynamically informed, personalized scripts.
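How a service might derive recommendations from access history can be sketched in a few lines of Python. This is a deliberately crude illustration and makes no claim about Amazon’s actual methods: the user names, the item titles and the overlap-based scoring rule are all assumptions chosen for the example.

```python
from collections import Counter

# Hypothetical access histories: user -> set of items viewed or bought.
HISTORIES = {
    "ann": {"cataloging basics", "metadata handbook", "intro to xml"},
    "ben": {"cataloging basics", "metadata handbook"},
    "cat": {"metadata handbook", "intro to xml", "web design"},
    "dan": {"web design", "css tricks"},
}


def recommend(user, histories, limit=3):
    """Suggest unseen items, ranked by overlap with other users' histories."""
    seen = histories[user]
    scores = Counter()
    for other, items in histories.items():
        if other == user:
            continue
        overlap = len(seen & items)  # shared items act as a crude similarity weight
        if overlap == 0:
            continue
        for item in items - seen:
            scores[item] += overlap
    # Highest score first; ties are broken alphabetically for a stable order.
    ranked = sorted(scores.items(), key=lambda pair: (-pair[1], pair[0]))
    return [item for item, _ in ranked[:limit]]


suggestions = recommend("ben", HISTORIES)
print(suggestions)
```

The point of the sketch is that no expert curates the suggestions: they emerge from the accumulated behavior of other end-users, which is what distinguishes this kind of guidance from that of a reference librarian.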

Web 2.0 buttresses, embraces and facilitates “Long Tail” business models. In the words of Miller: “[embracing Long Tail makes] it increasingly cost-effective to service the interests of large numbers of relatively small groups of individuals, and to enable them to benefit from key pieces of the platform while fulfilling their own needs.” In practice Long Tail models allow for the sale and distribution of small numbers of unique, end-user made content.[10] Websites like CafePress and Zazzle allow end-users to design products for free and then offer the manufacturing and logistics to distribute to clusters of consumers at a modest fee. The end-user has the ability to create, grade and police content.

Lastly and most importantly Web 2.0 systems are based upon trust. A true democracy: dubious articles are spotlighted and isolated by end-user review rather than the expertise of a professional. Interoperability, the tendency of various end-users and firms to work collaboratively, is only possible when the various parties believe they are not being scammed. Well designed Web 2.0 services facilitate trust and cooperation amongst end-users and provide automated systems to respond to problems. Users who consistently contribute valued, reliable content are positively reviewed by other end-users and so become “authorities.” As we will see, this is one of the most criticized aspects of Web 2.0, but this criticism has not slowed popular interest in, or the embrace of, such a system.

How are these trends actualized into projects? The following chart, courtesy of Tim O’Reilly, helps to map out the ways in which Web 2.0 has fundamentally changed the way information is accessed and stored across the internet:[11]

| Web 1.0 | | Web 2.0 |
| --- | --- | --- |
| DoubleClick | → | Google AdSense |
| Ofoto | → | Flickr |
| Akamai | → | BitTorrent |
| mp3.com | → | Napster |
| Britannica Online | → | Wikipedia |
| personal websites | → | blogging |
| evite | → | upcoming.org and EVDB |
| domain name speculation | → | search engine optimization |
| page views | → | cost per click |
| screen scraping | → | web services |
| publishing | → | participation |
| content management systems | → | wikis |
| directories (taxonomy) | → | tagging (“folksonomy”) |
| stickiness | → | syndication |

Figure 2. Web 1.0 and Web 2.0 comparative services. Note the underlying themes behind the Web 2.0 services (see: Miller). Courtesy: Tim O’Reilly.

Criticisms

Tim Berners-Lee has derided Web 2.0 as being nothing more than jargon, the buzzing rhetoric of those ignorant of the original aims and functions of the Web.[12] In this sense critics argue that many of Web 2.0’s touted novelties are nothing but cosmetically sharp reiterations of Web 1.0 technologies, citing the example of Amazon.com preceding the supposed Web 2.0 revolution by up to a decade.[13] Many of the virtues of Web 2.0 are said to originate in much earlier computer-supported collaborative learning and computer-supported cooperative work software.

A particularly scathing debunking effort was made by the online peer-reviewed journal First Monday, whose March 2008 issue was entirely dedicated to dispelling Web 2.0’s popular affections, calling Web 2.0 a product of techno-utopianist rhetoric and market hype.[14] Perhaps more alarmingly The Economist claimed in 2005 that Web 2.0 could facilitate another internet bubble and subsequent burst, which they not-so-ironically entitled “Bubble 2.0,” as firms were prone simply to reattempt failed objectives without sustainable business models.[15] There is also the claim that Web 2.0 end-user collaboration leads to content which is mediocre, dangerous or unreliable when compared to professionally crafted equivalents, a sort of lowest common denominator authorship standard.[16] A particular target of criticism is Wikipedia, which is often attacked for its lack of rigor and authenticity not only by critics but by many casual readers with academic credentials. We shall examine and debunk those claims later.

While many of the technologies, design motifs and trends expressed previously are not novel creations of the Web 2.0 paradigm, Web 2.0 offers a culture of interconnection amongst them, creating a final product that is multi-faceted and offers a superior degree of usability and user friendliness. In this sense Web 2.0 can be considered to be not only a prominent collection of emerging and streamlined technologies all focused around empowering the end-user to create and share information, but also a spirit, attitude or state of mind behind design decisions.

Web 2.0 as it applies to Library Science

One might read the preceding sections of this paper and come to the conclusion that library science and internet culture exist as two exclusive domains, and that the tumultuous changes from Web 1.0 to Web 2.0 would have only a minimal effect on the nature and function of libraries. This conclusion however could not be further from the truth: Web 2.0 has fundamentally changed the way users retrieve information, and accordingly changed the ways in which they perceive and utilize library resources.

Consider one facet of information retrieval: journals. With the introduction of online journals and plaintext databases, the printing of journals has vastly declined.[17] This is no surprise, considering that since 1999 the online journal has replaced the print journal as the desired means of accessing the information contained within, even when both alternatives are offered, with one minority exception being academic faculty, who still slightly prefer print journals.[18] The Web 2.0 technologies behind the online plaintext databases, not to mention the various individual subscriptions, package subscriptions and aggregators, are preferred over physical plant access and loaning.

Statistics indicate a preference for online information retrieval. Between 2002 and 2004 alone the average number of transactions at American libraries declined 2.2%.[19] The University of California Library system saw circulation decline 54% between the 1990s and the 2000s, from 8,377,000 to 3,832,000 books.[20] Libraries have attempted to cope with these changes by digitizing their collections and contributing to OPAC, but as usage continues to decline so does funding. Cornell University, facing budget cuts to library funding, recently sold off 95,000 print duplicates to Tsinghua University in Beijing. Doing so freed up space, stabilized the funding crisis and expanded access to digital collections.[21] Other libraries have redistributed their resources to become completely digital in hopes of cutting losses, doing away with their physical collections entirely.[22] In many ways the days of print appear numbered, a situation only exacerbated by the looming economic crisis, which falls hardest on educational institutions. Libraries are finding a compromise between losing their collections entirely and keeping them while cutting other portions of the budget: digitizing. The future of our discipline may involve combining the interactive technologies and “findability” (to borrow Morville’s term) of a Wikipedia with the wisdom and professionalism of librarianship.

One 2006 study indicated that 70% of college freshmen, 60% of sophomores, 72% of juniors, 63% of seniors and 75% of graduate students conducted a remote search (Google, Wikipedia, etc.) rather than go to a physical place (library) or ask a person for assistance in answering a research question. Subsequent attempts at information retrieval were even less likely to involve a visit to a library, with the exception of graduate students: while 19% preferred a library as their first place to go for information retrieval, 21% preferred a library for a subsequent attempt.[23] A 2007 study indicated that only 2% of students surveyed visited a library for research, although 23% did visit a library website.[24] Other resources filling the gap included asking professors, consulting course materials, using Wikipedia and search engines. These trends are even more pronounced in the general end-user population not involved in academia, who tend to hold outdated, negative or confused perceptions of the “library,” ignorant of the vast electronic and multimedia resources many libraries now offer.[25] This demonstrates a general disenchantment and uncertainty about using library resources, as well as a general disinterest in visiting physical plants. The convenience of online research prevails over whatever expertise could be offered locally, as that expertise lies outside the user’s realm of perception.

While libraries had in previous decades met the local needs of the community with excellence, today they struggle to adapt to our new Web 2.0 world. Herein is the story of many libraries across the nation, which, waylaid by the lightning tempo of technological trends, must offer new services on increasingly limited budgets and adapt traditional methodologies to new norms or else lose their utility altogether.[26] It is not enough in our day, as we will see, to perform the legacy functions of a library: to house and maintain physical collections of records. These anachronistic institutions are dissolving, changing their fundamental nature or closing their doors to the public. The story of the local library is a story of our times, a reflection on how changing technology has possessed and manacled our actions to novel expectations.[27]

A solution to this influx of new data may not be found in librarians, or other professionals, but instead in gamers and voluntary user collaboration. Take the GWAP/ESP game, a pet project of computer scientist Luis von Ahn: a simple multiplayer experience in which players have to describe an image using metadata (descriptors) while also matching what the other player picks. This game is behind the recent vast improvement in Google Image Search queries (which, as you may have noticed, now allows you to do all sorts of advanced searches), as the logoi derived from the game play have been imported into the search engine.[28] The task of cataloging millions of images based on verbose descriptors would have proved impossible for a professional team, not to mention economically impractical. Yet give the users of the internet a fun game in which they have to guess what other people are thinking in describing an image, and you can catalog vast amounts of information for free.
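The matching mechanic described above can be sketched in Python as follows. This is a simplified illustration under stated assumptions and not the actual implementation of the ESP game: the image identifiers, the guesses and the rule that a label counts only when both players typed it are taken from the description in this paper, while the function names and the ranking in `search` are hypothetical.

```python
from collections import defaultdict

# image id -> {label: number of rounds in which both players agreed on it}
agreed_labels = defaultdict(lambda: defaultdict(int))


def normalize(word):
    """Lower-case and trim a guess so 'Dog ' and 'dog' count as a match."""
    return word.strip().lower()


def play_round(image_id, guesses_a, guesses_b):
    """Record every label that both players typed for the same image."""
    matches = {normalize(g) for g in guesses_a} & {normalize(g) for g in guesses_b}
    for label in matches:
        agreed_labels[image_id][label] += 1
    return matches


def search(label):
    """Return image ids tagged with the label, most-agreed first."""
    label = normalize(label)
    hits = [(counts[label], image_id)
            for image_id, counts in agreed_labels.items() if label in counts]
    return [image_id for _, image_id in sorted(hits, key=lambda h: (-h[0], h[1]))]


# Three invented rounds between pairs of players.
play_round("img-001", ["Dog", "park", "frisbee"], ["dog", "grass", "Frisbee"])
play_round("img-001", ["dog", "jumping"], ["puppy", "dog"])
play_round("img-002", ["cat", "dog"], ["dog", "sofa"])
print(search("dog"))
```

Agreement between two independent players serves as the quality check: a descriptor that only one player offers, such as "park" above, is never recorded, so no professional cataloger is needed to vet the labels.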

Herein I think lie the most compelling prospects for integrating new technologies into libraries. As the digital age has brought an influx of new information to bear, a colossal task for small groups of professional librarians to manage and catalog, we must create a forum or capacity for visitors to join a community and engage in collaborative work to the benefit of our objectives. This may only be implemented by posing some fundamental questions about the nature, function and “good” of libraries. We cannot envision what technologies to create or adopt if we are mute on the ultimate overarching goals of our enterprise. This might sound like a pedantic point, but given the vast wealth of technological resources available I do not believe that it is. As public funding dwindles under economic woes, we must have the wisdom and foresight to pick our projects carefully. Essentially, what is the central mission of the library?

I believe that Wikipedia represents the most exemplary case. Therein we have an institution, staffed completely by volunteers through love of knowledge, who quest to offer the sum of all human knowledge for free. Wikipedia bridges the old school of library (the collection of knowledge) with the new school of technology (collaborative creation). This I believe is key if libraries hope for long-term viability. They must become universal, digitized and creative; creative in the sense that they are bastions of creation. Librarians might serve as professional guides to perceiving and searching for knowledge, a service which Wikipedia cannot offer.[29] We must act as Plato’s guardians, Dante’s Virgil, ensuring the spiritual wellbeing of the visitor as they trudge through the sometimes intimidating and dangerous morass of knowledge. We are the conduits for technology to be used ethically and effectively, and guides for the ignorant to learn in a safe and reliable fashion.

As Rubin points out, libraries have historically acted as patrons of new technologies, most notably for purposes of this discussion being the internet.[30] But simply providing internet access is not enough in this new age of Web 2.0, where creation, personalization and collaboration have infiltrated every aspect of the user experience. Static knowledge is a thing of the past, and will soon only be useful as historical documents or cultural treasures. Libraries have to cope with this or will default as “archives” rather than as places of learning.

A Web 2.0 Library at Work: Wikipedia

Wikipedia is a collaborative web-based encyclopedia which markets itself as being free to edit, reproduce and view. All content, ranging from the controlled vocabulary which founds it to the plain text and images of the articles themselves, is submitted and engineered by volunteer contributors. Wikipedia is the computer age’s most daring and bold project: it is an attempt at making all knowledge free and all claims corroborated. While claims in print are static and unchanging, only revised by subsequent printings or not at all, Wikipedia offers dynamic articles that are continually updated to improve the breadth of knowledge, understanding and the impacts and significance of current events. In this way Wikipedia is leading to the day in which books will be treated as primary sources and historical artifacts rather than as sources of firm, reliable reference. As Wikipedia is made freely available, free of corporate patrons to appease, it has become a neutral ground to disseminate information to all users of the internet and beyond. ‘Beyond’ in the sense that Wikibooks and other subprojects are aiming to provide quality open source textbooks to those who cannot afford the costly antecedent alternatives.

Wikipedia poses a significant threat to traditional librarianship: in the world of Wiki all users are potential librarians, and while many lack the technical expertise of a master of library and information science, Wikipedia contributors have become expert archivists, catalogers and information retrieval specialists. The fundamental difference between the old guard and Wiki is that the latter does not have a top-down hierarchy but a horizontal one: collaboration and deliberation are used to produce and maintain content and to make executive decisions, in contrast to the professionalism of traditional librarianship. Herein is the pinnacle of Web 2.0 genius making a play of classical technology: Wikipedia is the next generation library, putting everything we know about the discipline into question, including the necessity and utility of our jobs. If the majority of users are skilled at information retrieval then of what use is our kind?[31] One could make the argument that we will always serve as wise guardians and gateways to knowledge in the same sense Virgil was to Dante, providing the tools needed to safely and efficiently access and retrieve information. But what will happen when the day comes in which the human race no longer needs our aid in learning of information systems, when these complex rhythms become basic facets of the rearing process and information retrieval becomes a rudimentary function of human experience? The digital divide is slowly eroding and so surely this latter time will come.[32] Wikipedia is the cause of the appropriate consternation the reader may be feeling.

Yet Wikipedia is not without flaw. A casual user has no means of determining if an article has been vandalized, if critical information has been removed or if the claims the article is making are corroborated by sound evidence.[33] Vandalism is possible, although usually reverted by vigilant administrators and automated bots within minutes.[34][35] As edits are potentially anonymous, Wikipedia can be an unreliable tool for information retrieval on politically charged or controversial topics, as slander and trolling are concerns.[36] Other critics argue that Wikipedia’s claims are substantiated by consensus rather than by the rigor of evidence.[37] Yet the majority of these concerns and criticisms are fallacious. Articles which are repeatedly vandalized or which cover topics of a charged political nature are locked and carefully scrutinized by dozens of experts.[38] All modifications to Wikipedia articles are listed in a central location which is patrolled by innumerable volunteer administrators on a perpetual basis. Creating nonsense articles, making false claims and simply vandalizing content are increasingly difficult as Wikipedia increases in popularity and appeal. Claims without citations are frequently marked up with a “citation needed” tag, and citations must meet extensive and objective notability and credibility criteria to be included in articles.[39] In 2007 “Wikiscanner” was established to detect corporate infiltration of Wiki content. Policies and procedures were accordingly established to prevent skewed edits and corporate agendas from mangling the neutrality of the encyclopedia.[40] P.D. Magnus (2009) concluded that Wikipedia could be used as a reliable research tool if approached with skepticism and an awareness of its potentially unreliable nature.[41]

Clarification: Wikipedia as Library

While Wikipedia is by nature an encyclopedia, it also shares many characteristics with a library. Wikisource, a dependent project of Wikipedia, is a repository of public domain primary and secondary documents, while Wikibooks offers free and regularly updated textbooks to the viewing public. The Commons is another dependent project of Wikipedia which serves as a multimedia library, housing hundreds of thousands of images, video and audio clips, and other pieces of data traditionally found in a library media center. In this sense Wikipedia is not simply an encyclopedia of articles informing the user on desired topics of importance (what we might call “meta-knowledge”), but a repository for knowledge itself; Wikipedia has its own collections in the classical sense and the number of contributions is increasing by the hour.

It must be added that the actual structure and design of the Wikipedia database is in itself a library of some magnificence and of notable internal sophistication. Wikipedia’s record structure is complex and difficult to describe. The most basic cataloging unit is called a “category.” Categories are simply collections of articles organized by topic, which can then be further broken down into subcategories. Both categories and subcategories can be formatted for presentation in a myriad of ways: alphabetically, chronologically, numerically, randomly, or by custom lists tailored to the subject matter. In this rather anarchic system, categories are determined by the editors, and the record structure can change seamlessly and dynamically based upon summary edits or through discussion. The category structure is listed at the bottom of any Wikipedia article, and various subcategories can be accessed via hypertext links in order to list all the sorted articles under that particular subcategory alone. How complex the record structure is for a given group of articles is determined by the editors: some articles are sorted at the end of ten or twenty subcategories, while other articles are only vested under one or two subcategories. It is possible to directly retrieve an article through search or by clicking on a hyperlink rather than navigating the various categories and portals. Academic precedents are typically utilized to form a grammar of cataloging, especially in regard to scientific and medical articles. In this sense records are not cataloged randomly on Wikipedia, even though the system technically allows for disorder.[42]
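The category structure just described can be modeled, very roughly, as two lookup tables: one linking each category to its subcategories and one linking each category to the articles filed directly under it. The Python sketch below is an assumption-laden miniature and not Wikipedia’s actual database schema; the category names, article titles and function names are all illustrative.

```python
# Hypothetical miniature category graph; names are illustrative only.
SUBCATEGORIES = {
    "Science": ["Biology", "Physics"],
    "Biology": ["Genetics"],
    "Physics": [],
    "Genetics": [],
}
ARTICLES = {
    "Science": ["Scientific method"],
    "Biology": ["Cell (biology)"],
    "Physics": ["Gravity"],
    "Genetics": ["DNA", "Gregor Mendel"],
}


def articles_under(category, seen=None):
    """Collect every article filed under a category or any of its subcategories."""
    seen = set() if seen is None else seen
    if category in seen:  # editors can create loops, so guard against cycles
        return set()
    seen.add(category)
    found = set(ARTICLES.get(category, []))
    for child in SUBCATEGORIES.get(category, []):
        found |= articles_under(child, seen)
    return found


def categories_of(article):
    """List the categories an article is filed under directly (the footer list)."""
    return sorted(name for name, titles in ARTICLES.items() if article in titles)


biology_articles = sorted(articles_under("Biology"))
print(biology_articles)
print(categories_of("DNA"))
```

Because the tables are plain data that any editor may change, the hierarchy can be rearranged without touching the retrieval logic, which mirrors the way the record structure on Wikipedia shifts through edits and discussion.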

Web 2.0 as a scheme for library development

While Wikipedia is not flawless it does offer, by virtue of Web 2.0 technology, an exciting new way of accessing information which is preferred by the majority of the end-user population to the traditional services of a library. Coombs (2007), working off the theoretical groundwork of O’Reilly and Miller, proposed a scheme for adapting the pillar concepts of Web 2.0 with a special emphasis on Wiki technology to libraries.[43] These pillars include radical decentralization, small pieces loosely joined, the perpetual beta, remixable content, user as contributor and a rich user experience. Several of these motifs have already been reviewed in the first section of this paper, but here they will be applied in practice to a library’s web presence.

Radical decentralization is recommended to enable library staff to seamlessly update, expand upon and personalize the library’s web presence. While Web 1.0 models typically involve a highly centralized and static data structure whose rigidity hinders frequent updates, Coombs recommends a wiki-style content management system in which staffers are able to collaboratively edit website content. Accompanying this shift in technology is a change in doctrine and in command and control: while changes to Web 1.0 websites typically have to be confirmed by an administrator, Coombs argues that this responsibility should be delegated to library staff.

Secondly, Coombs argues that inflexible designs, with their inherent tendency to “silo” data, should be deconstructed and converted to a series of “small pieces loosely joined.” In practice this implies redistribution of data to interactive blogs, wikis and content management systems. The functionality of Web 2.0 services can be achieved with little expense due to the wide availability of open source software such as MediaWiki for wikis and Movable Type for weblogs. Content management systems should be designed to be modular, offering distinct modules for different content types. The result of this latter design feature “is that content is reusable throughout the site.” Modular data can also be recombined and transfigured to aid in the formation and work of collaborative projects. This methodology allows new module classes to be added without having to change the underlying foundation of the website, enabling long term viability and expansion.
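The modular principle can be illustrated with a brief sketch. The Python below is not taken from Coombs or from any particular content management system; the content types, function names and sample text are hypothetical, and the point is only that each content type has its own small module while the page assembler never needs to change.

```python
from dataclasses import dataclass


@dataclass
class ContentItem:
    kind: str   # content type, e.g. "news" or "hours" (hypothetical types)
    title: str
    body: str


# Registry mapping each content type to the module that renders it.
RENDERERS = {}


def register(kind):
    """Decorator that plugs a renderer module in for one content type."""
    def decorator(func):
        RENDERERS[kind] = func
        return func
    return decorator


@register("news")
def render_news(item):
    return f"NEWS: {item.title} - {item.body}"


@register("hours")
def render_hours(item):
    return f"HOURS ({item.title}): {item.body}"


def render_page(items):
    """Assemble a page from loosely joined pieces; unchanged when new modules appear."""
    return "\n".join(RENDERERS[item.kind](item) for item in items)


# The same piece of content is reused on two different pages.
hours = ContentItem("hours", "Main Library", "9am-9pm")
news = ContentItem("news", "New wiki", "Staff can now edit pages")
home_page = render_page([news, hours])
visit_page = render_page([hours])
print(home_page)
print(visit_page)
```

A new content type would be added by registering one more renderer, leaving `render_page` and the existing modules untouched.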

Coombs thirdly argues for the benefits of the perpetual beta. This notion “embraces change and creates an environment where systems are deployed early so that iterative and constant improvements can be made.” An important component of this design decision is to survey the end-user population to determine which additional systems to develop, and to make users a part of the development process. Feedback from the users is critical, as it allows constant improvements to the digital services being offered.

Remixable content speaks to the ability of data to be reused in other applications. Essentially this involves offering an API (application programming interface) to users so that they can use the underlying technologies behind the library’s portal to support affiliated projects elsewhere. Coombs makes special note of how useful this can be across university systems, in which a perpetually updated API can be used as a foundation for department websites, database systems and data aggregation. The importance of remixable content speaks to the need of Web 2.0 developers to collaborate with other developers and with the end-user to ensure a rich user experience.
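As a rough illustration of remixable content, the sketch below shows a library exposing its records in a machine-readable form (JSON) and a department website reusing them for its own purposes. It is a minimal Python example under stated assumptions: the function names, the record fields and the two sample records are hypothetical, and a real API would be served over HTTP rather than called directly.

```python
import json

# Hypothetical sample records standing in for a library catalog.
CATALOG = [
    {"id": 1, "title": "Foundations of Library and Information Science",
     "subject": "library science"},
    {"id": 2, "title": "The Cathedral and the Bazaar",
     "subject": "software"},
]


def api_records(subject=None):
    """Library side: return catalog records as JSON, optionally filtered by subject."""
    matches = [r for r in CATALOG if subject is None or r["subject"] == subject]
    return json.dumps({"count": len(matches), "records": matches})


def department_reading_list(subject):
    """Consumer side: a department site remixes the library's data into its own list."""
    payload = json.loads(api_records(subject))
    return [record["title"] for record in payload["records"]]


print(department_reading_list("library science"))
```

The consumer depends only on the published JSON format, so the library can keep improving its own systems behind the interface without breaking the affiliated sites that build on it.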

Coombs argues that another important Web 2.0 pillar applicable to Library 2.0 is the notion of user as contributor. The author performed an anecdotal survey, concluding that library staff members tended to feel that their websites needed to be more engaging and useful to the end-user. One way in which this can be effected is to “[provide] them with a space where they can create content and give feedback.” The author contends that most library websites do not provide this service to users. In the case of the library website which Coombs was active in redesigning (University of Houston), wikis were implemented for library instruction classes, a medium in which users are able to create and maintain content alongside librarians. Websites could be designed so that users could tag, review and catalog the collections. The author also suggests that users could contribute to site content itself, although special attention must be paid to the technical implementation of such a process so that security and integrity are upheld. Faculty archive repositories can be designed to provide a space where research materials can be contributed so that collaborative projects will take root organically.

Lastly, Coombs stresses the importance of ensuring a rich user experience. Allowing users to contribute content and exposing them to an extensive variety of content are two ways to accomplish this. In addition, interactive multimedia, including podcasts and streaming video, can be offered. Instant messaging and chat reference can be added to the website to offer additional ways in which the end-user can interact with librarians. Customized content can be offered through “subject-oriented portals,” without the risk of breaching user transaction confidentiality.

All of these methods are ways in which libraries can update their web presence in order to avoid losing their users to the Web 2.0 alternatives, the most prominent of which is Wikipedia. If we librarians are able to adopt the technologies of the now while wielding the wisdom and expertise of the past, and open our structures up to the end-user rather than remain rigid, centralized and exclusive, we may flourish.

Discussion

Ultimately the various topics examined in this paper all point to a general need to reconsider the place of libraries and the librarian in this new age of information. While libraries have taken measures to digitize, many institutions have not, or have done so in an incomplete and piecemeal fashion. Where institutions have digitized, they have done an insufficient job of branding and redirecting users to their valuable and unique resources. These conclusions are corroborated by an extensive 2005 Council on Library and Information Resources report.[44] As the report puts it: “What is the role of a library when users can obtain information from any location? And what does this role change mean for the creation and design of library space?”

These are questions that need to be addressed, and lurking behind them are serious threats to the traditional services of the library. Libraries must continue to adapt or they will become anachronistic institutions, relics from a bygone era which are overshadowed by the publicly preferred Web 2.0 technologies and standards of the now. The services of the past, heavily reliant on professional, top-down design, are being thrust into obscurity by the collaborative standards of this new paradigm. Accordingly librarians must develop a sense of self through the flux, developing a clear identity and job role. That discussion is outside the domain of this paper, but it must be reiterated that this process of re-evaluation will be necessary given the new processes of information retrieval which now dominate the horizon. One solution is for the librarian to be the engineer and guide behind complex collaborative information retrieval systems, ensuring the healthy functioning of a more democratic and anarchic system. In the end the most successful will probably be those who can bridge the gap between Library 2.0 and classical librarianship, offering the best of innovative technology with the wisdom of our professionalism.

References

Abel, D. (2009, September 4). Welcome to the library. Say goodbye to the books. The Boston Globe. Retrieved from http://www.boston.com/news/local/massachusetts/articles/2009/09/04/a_library_without_the_books/

Anderson, C. (2004). The Long Tail. Wired, 12 (10). Retrieved from http://www.wired.com/wired/archive/12.10/tail.html

Applegate, R. (2008). Whose Decline? Which Academic Libraries are “Deserted” in Terms of Reference Transactions? Reference and User Services Quarterly, 2 (48), 176-89.

Coombs, K. A. (2007). Building a Library Web Site on the Pillars of Web 2.0. Computers in Libraries, 1 (27). Retrieved from http://infotoday.com/cilmag/jan07/Coombs.shtml

De Groote, S.L., & Dorsch, J.L. (2001). Online journals: impact on print journal usage. Bull Med Libr Assoc, 89(4). Retrieved from http://www.ncbi.nlm.nih.gov/pmc/articles/PMC57966/

De Rosa, C. (2005). Perceptions of Libraries and Information Resources. Retrieved from http://www.oclc.org/reports/2005perceptions.Htm

Dewan, S., Ganley, D., & Kraemer, K.L. (2009). Complementarities in the Diffusion of Personal Computers and the Internet: Implications for the Global Digital Divide. Information Systems Research, 2009. Retrieved from http://isr.journal.informs.org/cgi/reprint/isre.1080.0206v1

Flintoff, J. (2007, June 3). Thinking is so over. Times Online. Retrieved from http://technology.timesonline.co.uk/tol/news/tech_and_web/personal_tech/article1874668.ece

Foudy, G., Johnson, T., Kaske, N., & Wendling, D. (2006). Is Google God? How Do Students Look for Information Today? [PowerPoint Slides]. Retrieved from http://www.lib.umd.edu/MCK/foudyjohnson.ppt

Freeman, G.T. (2005). Library as Place: Rethinking Roles, Rethinking Space. Washington DC: Council on Library and Information Resources.

Hafner, K. (2007, August 19). Seeing Corporate Fingerprints in Wikipedia Edits. The New York Times. Retrieved from http://www.nytimes.com/2007/08/19/technology/19wikipedia.html

Head, A. J. (2007). Google: How do students conduct academic research? First Monday, 8 (6). Retrieved from http://firstmonday.org/htbin/cgiwrap/bin/ojs/index.php/fm/article/view/1998/1873

Holt, G.E. (2005). Beyond the pain: understanding and dealing with declining library funding. The Bottom Line: Managing Library Finances, 18 (4), 185-190.

King, D.W., & Montgomery, C.H. (2002). After Migration to an Electronic Journal Collection: Impact on Faculty and Doctoral Students. D-Lib Magazine, 8(12). Retrieved from http://www.dlib.org/dlib/december02/king/12king.html

King, D.W., Tenopir, C., Montgomery, C.H., & Aerni, S.E. (2003). Patterns of Journal Use by Faculty at Three Diverse Universities. D-Lib Magazine, 9(10). Retrieved from http://www.dlib.org/dlib/october03/king/10king.html

Kleinz, T. (2005). World of Knowledge. Linux Magazine, 51. Retrieved from http://www.linux-magazine.com/Issues/2005/51/World-of-Knowledge

Krause, C. (2009). Wikipedia.

Krause, C., & Matei, D. (2009). An Analysis of Management Methods and Institutional Design at Patchogue Medford Public Library.

Leavesley, J. (2005). Talis White Paper: Project Silkworm. Retrieved from http://www.talis.com/applications/downloads/white_papers/silkworm_paper_13_06_2005.pdf

Lenhart, A., Horrigan, J., & Fallows, D. (2004). Content Creation Online. Pew Internet & American Life Project research report. Retrieved from http://www.pewinternet.org/pdfs/PIP_Content_Creation_Report.pdf

Magnus, P.D. (2009). On Trusting WIKIPEDIA. Episteme, 6 (1). Retrieved from http://www.britannica.com/bps/additionalcontent/18/36449903/On-Trusting-WIKIPEDIA

Marks, P. (2005, September 22). Fears of another internet bubble. The Economist. Retrieved from http://www.economist.com/business/displaystory.cfm?story_id=E1_QQNVDDS

McHenry, R. (2008). Wiki and Wikipedia: Overview. Caslon Analytics. Retrieved from http://www.caslon.com.au/wikiprofile1.htm

Miller, P. (2005). Web 2.0: Building the New Library. Ariadne, 45. Retrieved from http://www.ariadne.ac.uk/issue45/miller/

O’Reilly, T. (2002, July 18). Amazon Web Services API. Message posted to http://www.oreillynet.com/pub/wlg/1707?wlg=yes

O’Reilly, T. (2007). What is Web 2.0: Design Patterns and Business Models for the Next Generation of Software. Communications & Strategies, 1, p.17. Retrieved from http://ssrn.com/abstract=1008839

Potthast, M., Stein, B., & Gerling, R. (2008). Automatic Vandalism Detection in Wikipedia. Lecture Notes in Computer Science, 4956. Retrieved from http://www.springerlink.com/content/a457383n01w44653/

Raymond, E.S. (2000). The Cathedral and the Bazaar. Retrieved from http://www.catb.org/~esr/writings/cathedral-bazaar/cathedral-bazaar/ar01s04.html

Rubin, R. (2004). Foundations of Library and Information Science. New York: Neal-Schuman Publishers. Chapter 2.

Saini, A. (2008, May 14). Solving the web’s image problem. BBC News. Retrieved from http://news.bbc.co.uk/2/hi/technology/7395751.stm

Sanger, L.M. (2009). The Fate of Expertise after WIKIPEDIA. Episteme, 6 (1). Retrieved from http://www.eupjournals.com/doi/abs/10.3366/E1742360008000543

Sathe, N.A., Grady, J.L., & Giuse, N.B. (2002). Print versus electronic journals: a preliminary investigation into the effect of journal format on research processes. J Med Libr Assoc, 90(2). Retrieved from http://www.ncbi.nlm.nih.gov/pmc/articles/PMC100770/

Scott, L. (Interviewer) & Berners-Lee, T. (Interviewee). (2006). developerWorks Interviews: Tim Berners-Lee [Interview transcript]. Retrieved from IBM developerWorks Web site: http://www.ibm.com/developerworks/podcast/dwi/cm-int082206txt.html

Viégas, F.B., Wattenberg, M., & Dave, K. (2004). Studying cooperation and conflict between authors with history flow visualizations. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (Vienna, Austria, April 24 – 29, 2004). CHI ’04. ACM, New York, NY, 575-582. DOI= http://doi.acm.org/10.1145/985692.985765

Waldman, S. (2004, October 26). Who knows? The Guardian. Retrieved from http://www.guardian.co.uk/technology/2004/oct/26/g2.onlinesupplement

Willner, S. (2009, November 4). To Cut Costs, Library Unloads 95,000 Volume Duplicative Collection. The Cornell Daily Sun. Retrieved from http://cornellsun.com/section/news/content/2009/11/04/cut-costs-library-unloads-95000-volume-duplicative-collection

Zimmer, M. (2008). Critical Perspectives on Web 2.0. First Monday, 13 (3). Retrieved from http://www.uic.edu/htbin/cgiwrap/bin/ojs/index.php/fm/issue/view/263/showToc

Figure Captions

Figure 1. Web 2.0 diagram of related ideas. Courtesy: Tim O’Reilly.

Figure 2. Web 1.0 and Web 2.0 comparative services. Note the underlying themes behind the Web 2.0 services (see: Miller). Courtesy: Tim O’Reilly.

Figure 1

Figure 2

| Web 1.0 | | Web 2.0 |
| DoubleClick | –> | Google AdSense |
| Ofoto | –> | Flickr |
| Akamai | –> | BitTorrent |
| mp3.com | –> | Napster |
| Britannica Online | –> | Wikipedia |
| personal websites | –> | blogging |
| evite | –> | upcoming.org and EVDB |
| domain name speculation | –> | search engine optimization |
| page views | –> | cost per click |
| screen scraping | –> | web services |
| publishing | –> | participation |
| content management systems | –> | wikis |
| directories (taxonomy) | –> | tagging (“folksonomy”) |
| stickiness | –> | syndication |


Endnotes

[1] O’Reilly (2007).

[2] Raymond.

[3] Miller.

[4] Ibid.

[5] King et al. (2003); King & Montgomery (2002).

[6] Coombs.

[7] Leavesley.

[8] Lenhart et al.

[9] “Web feeds | RSS | The Guardian | guardian.co.uk”, The Guardian, London, 2008, retrieved from http://www.guardian.co.uk/webfeeds.

[10] Anderson.

[11] O’Reilly (2007).

[12] Scott et al.

[13] O’Reilly (2002).

[14] Zimmer.

[15] Marks.

[16] Flintoff etc.

[17] De Groote & Dorsch.

[18] Sathe et al.

[19] Applegate.

[20] University of California Library Statistics 1991-2001.

[21] Willner.

[22] Abel.

[23] Foudy et al.

[24] Head.

[25] De Rosa.

[26] Holt.

[27] Truncation from Krause & Matei.

[28] Saini.

[29] Miller refers to the emptying of isolated “information silos” to create interconnected, universally accessible libraries.

[30] Rubin.

[31] Sanger.

[32] Dewan et al.

[33] Encyclopedia Britannica editor-in-chief referred to it as a “faith-based encyclopedia.” As quoted in McHenry.

[34] Viégas et al.

[35] Potthast et al.

[36] Kleinz.

[37] Waldman.

[38] Wikipedia’s semi-protection policy: http://en.wikipedia.org/wiki/Protection_policy#Semi-protection

[39] Reliable sources/citations requirements: http://en.wikipedia.org/wiki/Wikipedia:Reliable_sources

[40] Hafner.

[41] Magnus.

[42] For more see: Krause.

[43] Coombs.

[44] Freeman.